The threat detection gap in enterprise security keeps widening. Attackers move faster, stay quieter, and exploit behavioral blind spots that signature-based tools were never designed to close.

That structural reality is why machine learning intrusion detection startups have moved from niche innovators to enterprise budget priorities — and why the platforms they build are reshaping what effective intrusion detection looks like in practice.

This guide addresses one core question: how should security leaders evaluate and deploy ML-based intrusion detection in 2026? It covers the technology mechanics, the leading platforms, the honest limitations, and the evaluation framework that separates useful deployments from expensive underperformers.

How Machine Learning Intrusion Detection Works

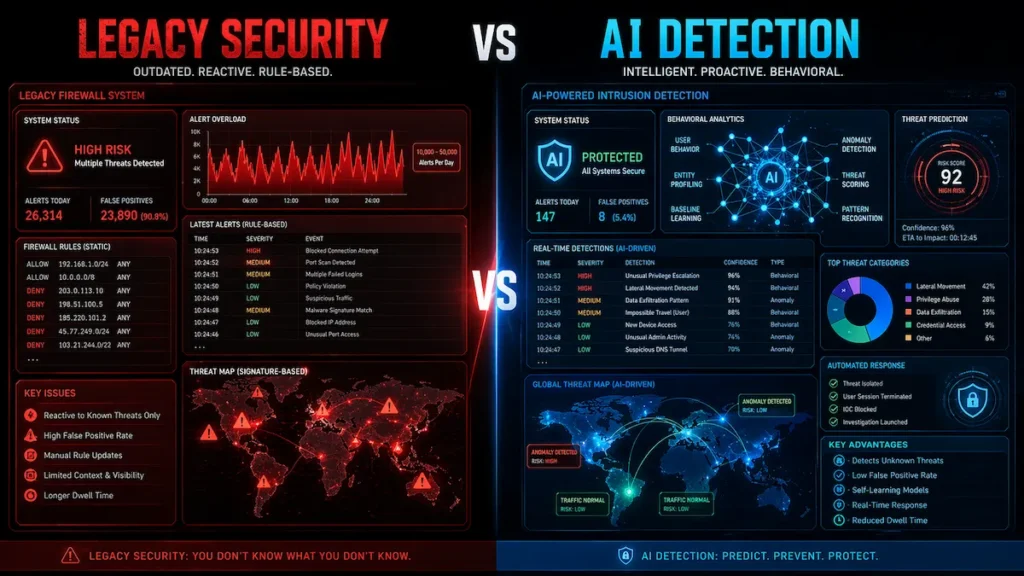

Traditional intrusion detection matches network traffic against a database of known attack signatures. For catalogued, well-documented threats, this works adequately. Against zero-day exploits, living-off-the-land techniques, and patient credential-based intrusions, it typically fails to raise an alert until meaningful damage has already been done.

The limitation is architectural — not incremental.

Signature-based detection is permanently reactive. It recognizes only what has already been documented. An attacker who obtains valid credentials, authenticates normally, and quietly enumerates internal file servers over several days rarely triggers a single rule. Each individual action appears legitimate. The intrusion often goes undetected until well after the damage is done.

Machine learning intrusion detection approaches the same situation differently.

Rather than matching known patterns, it models user and system behavior over time to detect deviations from established baselines. Those deviations become detection candidates — regardless of whether the underlying technique has ever been documented before.

A user whose access scope, authentication timing, and data movement have shifted sharply from 18 months of established history stands out immediately. A high-confidence alert fires with context already attached — before exfiltration begins.

That behavioral model is the core architectural distinction separating what AI-based platforms detect from what rule-based tools routinely miss. One asks whether a pattern matches a known rule. The other asks whether behavior has deviated from an established norm in ways consistent with threat activity. These are fundamentally different questions — and they produce fundamentally different detection outcomes.
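The baseline-and-deviation idea can be sketched in a few lines. This is a minimal illustration, not how any named platform works: it assumes a single hypothetical feature (distinct file servers touched per day), a plain z-score, and an assumed alert threshold of 3 standard deviations. Production systems model many features at once.

```python
from statistics import mean, stdev

def deviation_score(history, observed):
    """Return how many standard deviations `observed` sits from the historical mean."""
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # A perfectly flat history: any change at all is maximally unusual
        return 0.0 if observed == mu else float("inf")
    return abs(observed - mu) / sigma

# Hypothetical history: distinct file servers one user touched per day
history = [3, 4, 2, 3, 5, 4, 3, 4, 3, 4]

ALERT_THRESHOLD = 3.0  # assumed cut-off, tuned per environment in practice

normal_day = deviation_score(history, 4)        # an ordinary day
enumeration_day = deviation_score(history, 27)  # quiet enumeration of many servers

print(f"normal day:      {normal_day:.2f} sigma, alert={normal_day > ALERT_THRESHOLD}")
print(f"enumeration day: {enumeration_day:.2f} sigma, alert={enumeration_day > ALERT_THRESHOLD}")
```

Note that no rule describes what the attacker did. The alert comes purely from the distance between today's behavior and the user's own history.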

Why Alert Noise Made Change Inevitable

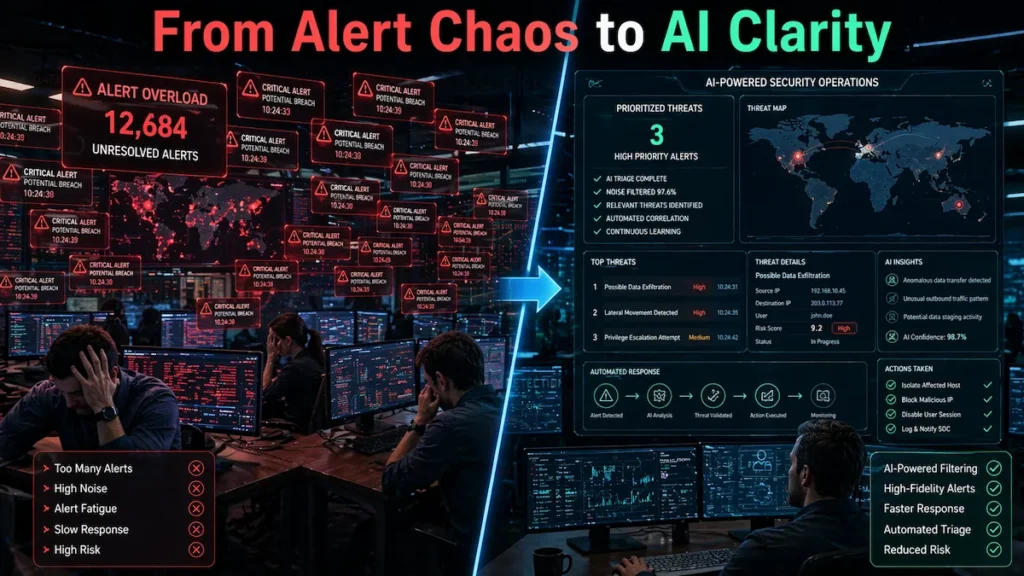

Beyond missed detections, rule-based tools created a serious secondary problem: noise.

A large enterprise SOC typically receives 10,000 to 50,000 security alerts per day. The overwhelming majority resolve as false positives. Analysts spend most of their shift chasing benign events while genuine threats sit unexamined in the queue behind them.

AI-powered threat detection tools address this structurally. By learning each environment’s specific behavioral fingerprint, they reduce false positive rates across documented deployments while improving detection confidence on real threats — giving analysts fewer alerts that actually warrant attention.

ML Approaches Used in Production Platforms

The strongest machine learning intrusion detection startups layer multiple detection methods simultaneously rather than relying on any single architecture.

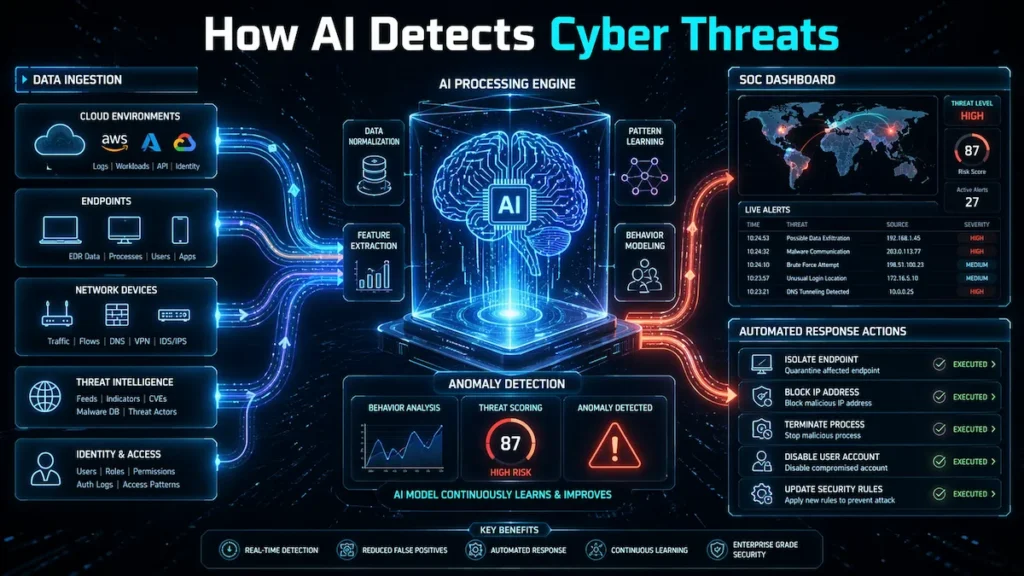

Unsupervised models — isolation forests, autoencoders, clustering algorithms — identify behavioral outliers without requiring labeled attack data. High-quality labeled datasets are scarce and often fail to represent the specific behavioral patterns of any given environment. Unsupervised approaches address that gap directly.
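To make "outliers without labels" concrete, here is a small sketch. It deliberately swaps in a simpler technique than the ones named above: instead of an isolation forest or autoencoder, it scores each session by its mean distance to its nearest neighbours. The feature names and values are hypothetical, and the features are left unscaled for brevity.

```python
import math

def knn_outlier_scores(points, k=2):
    """Score each point by its mean distance to its k nearest neighbours.

    No labels are needed: a point far from everything else scores high.
    """
    scores = []
    for i, p in enumerate(points):
        dists = sorted(math.dist(p, q) for j, q in enumerate(points) if j != i)
        scores.append(sum(dists[:k]) / k)
    return scores

# Hypothetical per-session features: (logins per hour, MB transferred).
# A real pipeline would normalise features before measuring distance.
sessions = [(2, 10), (3, 12), (2, 11), (3, 9), (2, 12), (40, 900)]

scores = knn_outlier_scores(sessions)
most_anomalous = max(range(len(sessions)), key=scores.__getitem__)
print("most anomalous session:", sessions[most_anomalous])
```

The last session stands out without anyone having told the model what an attack looks like, which is the property that matters when labeled data is scarce.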

Supervised models trained on historical incidents learn to recognize specific threat categories: lateral movement sequences, credential abuse, command-and-control communication, data staging behavior.

Ensemble and reinforcement learning methods combine multiple model outputs and refine detection logic continuously based on analyst feedback — improving accuracy measurably with every resolved alert over time.

Layering all three is what allows mature platforms to maintain reliable coverage across a broad and constantly shifting threat landscape. That said, results depend heavily on telemetry quality and the consistency of behavioral data across the monitored environment.
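A rough sketch of how layered scores and analyst feedback might interact is shown below. It is an assumption-heavy illustration: the model names, the 0.5 decision boundary, and the learning rate are all hypothetical, and the update is a simple multiplicative weight adjustment rather than reinforcement learning proper.

```python
def ensemble_score(scores, weights):
    """Weighted average of per-model anomaly scores, each in [0, 1]."""
    total = sum(weights[m] for m in scores)
    return sum(scores[m] * weights[m] for m in scores) / total

def apply_feedback(weights, scores, was_true_positive, rate=0.1):
    """Raise the weight of models that agreed with the analyst verdict, lower the rest."""
    updated = {}
    for model, score in scores.items():
        agreed = (score >= 0.5) == was_true_positive
        updated[model] = weights[model] * (1 + rate if agreed else 1 - rate)
    return updated

weights = {"unsupervised": 1.0, "supervised": 1.0}

# One alert: the unsupervised model is alarmed, the supervised model is not
alert = {"unsupervised": 0.9, "supervised": 0.2}
before = ensemble_score(alert, weights)

# The analyst closes the alert as a false positive
weights = apply_feedback(weights, alert, was_true_positive=False)
after = ensemble_score(alert, weights)

print(f"score before feedback: {before:.3f}, after: {after:.3f}")
```

After one resolved alert the same pattern scores slightly lower, which is the mechanism behind accuracy improving as feedback accumulates.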

Why This Category Is Growing

Legacy vendors built their products for a different era: on-premise infrastructure, clearly defined perimeters, and a threat landscape that moved slowly enough for human rule-writers to keep pace.

Those conditions no longer hold.

Machine learning intrusion detection startups — including both high-growth emerging platforms and late-stage vendors defining the category — are filling the structural gap with tools built specifically for hybrid cloud environments, distributed workforces, and adversaries that increasingly operate at machine speed.

The Market Drivers Behind Adoption

Machine learning intrusion detection startups address the limitations of rule-based security systems.

Rule-based SIEMs and signature firewalls share a defining flaw: every detection rule requires a human to write it, maintain it, and update it as threats evolve. That process is inherently reactive — and the gap between emerging attack techniques and updated rule libraries tends to widen over time, not shrink.

Breach dwell time illustrates the consequences. Without behavioral detection, attackers inside enterprise networks can remain undetected for extended periods — moving laterally, escalating privileges, staging data — while each action appears legitimate to a system built only to recognize what it already knows.

For teams operating with limited headcount, this exposure compounds quickly. AI network security monitoring for small teams addresses this directly, delivering detection coverage that would otherwise require SOC staffing levels most organizations cannot realistically sustain.

The global AI in cybersecurity market is projected to grow from $22.4 billion in 2023 to over $60 billion by 2028, at a compound annual growth rate of nearly 22% (source: MarketsandMarkets). ML-based intrusion detection sits at the center of that growth, not as a feature addition but as a foundational platform shift.
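As a quick sanity check on those figures, the arithmetic below works out how long it takes $22.4 billion to reach $60 billion at a 22% compound annual growth rate. The inputs are the rounded numbers quoted above, so the result is approximate.

```python
import math

def years_implied(start, end, cagr):
    """Years needed to grow from `start` to `end` at a constant annual rate."""
    return math.log(end / start) / math.log(1 + cagr)

start_bn, end_bn, cagr = 22.4, 60.0, 0.22

years = years_implied(start_bn, end_bn, cagr)
projected = start_bn * (1 + cagr) ** 5  # value after five full years of growth

print(f"implied horizon: {years:.1f} years")
print(f"after 5 years at 22%: ${projected:.1f}B")
```

The numbers are internally consistent with a projection window of roughly five years.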

Zero trust security architecture, as defined in NIST SP 800-207, further amplifies this demand. Continuous behavioral verification — the operational core of zero trust — works in close architectural alignment with modern ML intrusion detection, making these two investments complementary rather than competing.

Real-World Scenario: How ML Detection Stops a Multi-Stage Breach

Machine learning intrusion detection startups demonstrate real-world effectiveness in stopping complex attacks.

This walkthrough illustrates where ML-based detection typically intervenes in ways that rule-based tools structurally cannot replicate.

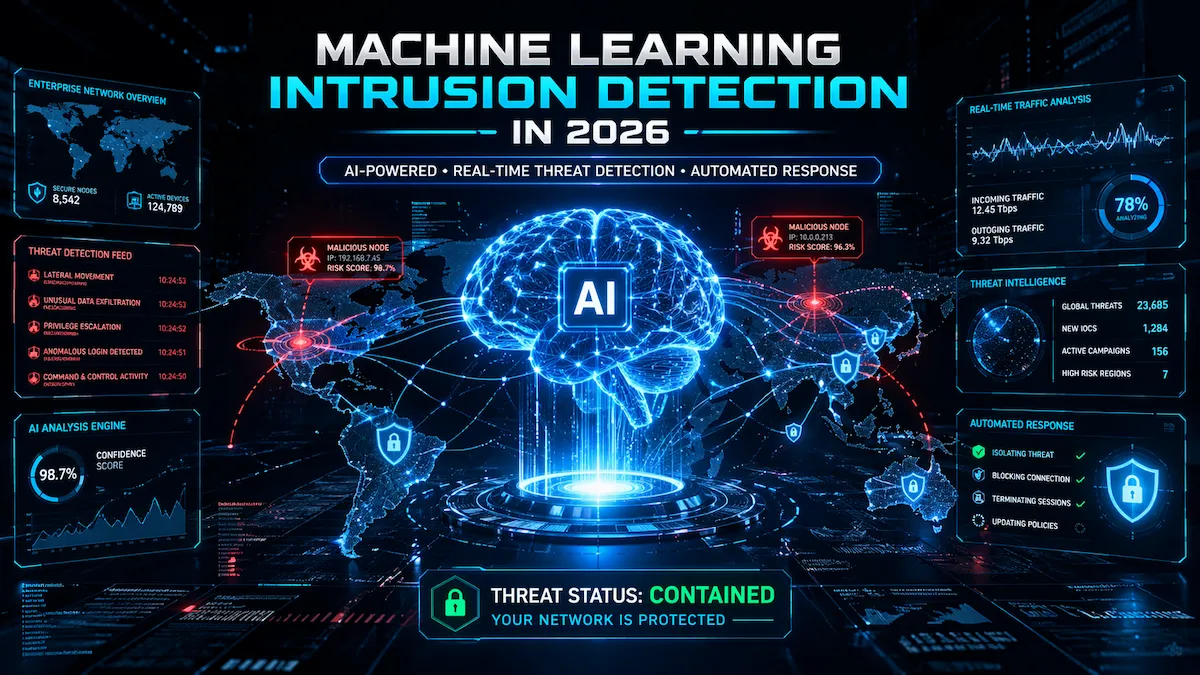

Stage 1 — Credential Theft. A targeted spear-phishing email compromises a senior IT administrator’s credentials. No malware deployed. No signature triggered. The attacker authenticates using valid credentials — an entry method that typically does not surface through rule-based detection alone.

Stage 2 — Lateral Movement Detected. The attacker enumerates Active Directory for privileged accounts and begins accessing systems this administrator has not touched in 18 months.

The ML platform detects behavioral deviation across multiple dimensions simultaneously: unusual access scope, authentication pattern shift, and network query behavior inconsistent with established history. A high-confidence alert fires — connecting the credential anomaly, unusual access pattern, and privilege escalation attempt into a single coherent incident with a complete timeline attached.

Stage 3 — Automated Containment. Before any analyst reviews the alert, the automated response layer isolates the compromised endpoint and revokes suspicious session tokens. Extended dwell time has been significantly compressed.

AI endpoint security platforms close the final operational gap here — translating network-layer detection signals into the endpoint-level containment actions that actually stop attacker movement from progressing further.

One important caveat: this scenario assumes clean, comprehensive telemetry across network, identity, and endpoint layers. In environments with telemetry gaps — partial cloud coverage, unmonitored legacy segments, incomplete identity logging — detection fidelity degrades accordingly. The quality of what goes into the platform directly determines the quality of what comes out.
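Stages 2 and 3 of the walkthrough can be sketched as two small functions: one that stitches related signals into an incident, and one that decides whether automated containment is justified. Everything here is hypothetical, including the signal names, the 60-minute correlation window, the independence assumption used to combine confidences, and the 0.9 threshold.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass
class Signal:
    entity: str        # account or host the signal is about
    kind: str          # e.g. "credential_anomaly"
    minute: int        # minutes since the start of observation
    confidence: float  # model confidence in [0, 1]

def correlate(signals, window=60):
    """Group signals per entity while each arrives within `window` minutes of the last."""
    by_entity = defaultdict(list)
    for s in sorted(signals, key=lambda s: s.minute):
        by_entity[s.entity].append(s)
    incidents = []
    for entity, items in by_entity.items():
        current = [items[0]]
        for s in items[1:]:
            if s.minute - current[-1].minute <= window:
                current.append(s)
            else:
                incidents.append((entity, current))
                current = [s]
        incidents.append((entity, current))
    return incidents

def containment_actions(incident, threshold=0.9):
    """Return automated actions only for multi-signal, high-confidence incidents."""
    _, items = incident
    miss = 1.0
    for s in items:
        miss *= 1 - s.confidence  # assumes signals are independent
    combined = 1 - miss
    if len(items) >= 2 and combined >= threshold:
        return ["isolate_endpoint", "revoke_session_tokens"]
    return []  # left for analyst review

signals = [
    Signal("it-admin", "credential_anomaly", 0, 0.6),
    Signal("it-admin", "unusual_access_scope", 20, 0.7),
    Signal("jdoe", "off_hours_login", 30, 0.5),
    Signal("it-admin", "privilege_escalation_attempt", 45, 0.8),
]

incidents = correlate(signals)
for incident in incidents:
    entity, items = incident
    print(entity, [s.kind for s in items], containment_actions(incident))
```

No single signal for the administrator account is conclusive on its own. The containment decision comes from the three of them arriving together, while the isolated off-hours login is left for a human to judge.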

ML Intrusion Detection Platform Landscape: A Neutral Market Overview

Machine learning intrusion detection startups vary in architecture, coverage, and operational tradeoffs.

The following represents an overview of notable platforms in this space. Inclusion does not constitute endorsement. Independent validation varies across vendors, and results depend heavily on deployment environment and telemetry quality. Enterprise buyers should conduct independent evaluation against their specific requirements.

Rather than feature comparisons, what matters for enterprise selection is where each platform performs well — and where it introduces operational tradeoffs worth understanding before committing.

Platform Overview by Detection Architecture

Machine learning intrusion detection startups differentiate through their unique detection architectures.

Vectra AI — Network-First Behavioral AI

Vectra focuses on post-compromise attacker behavior across network, identity, and cloud layers. Its Attack Signal Intelligence approach prioritizes detecting attacker behavior patterns over broad anomaly flagging. Vectra AI was recognized as a Leader in the 2025 Gartner Magic Quadrant for NDR, with its targeted attacker behavior models cited for substantially reducing alert noise across the full cyber kill chain (source: Vectra AI).

Notable tradeoff: the targeted behavioral model may generate fewer early-stage anomaly detections than broader unsupervised platforms — which can be an advantage for teams prioritizing precision, or a limitation for those prioritizing broad early-warning coverage. Benchmarks are not standardized across vendors, so claimed noise-reduction figures should be validated in your specific environment.

Typically suited for enterprises that prioritize high-confidence alert quality over broad anomaly coverage, and mid-market teams seeking managed detection and response capabilities.

Darktrace — Unsupervised Self-Learning AI

Darktrace models each organization’s unique behavioral “pattern of life” from initial deployment, using unsupervised machine learning to establish baselines without requiring pre-labeled data.

Its detection architecture combines unsupervised and probabilistic methods with supervised ML and large language models for triage and investigation, and includes an Autonomous Response capability that can take network-level containment actions without human intervention (source: Medium).

Notable tradeoff: the broad sensitivity that catches subtle novel threats can also generate higher alert volumes requiring active tuning investment to manage effectively. In regulated environments, autonomous response actions may require additional governance controls before deployment. Thoma Bravo’s $5.3 billion acquisition in 2024 enabled accelerated product investment and geographic expansion (source: Medium).

Typically suited for organizations seeking rapid broad-coverage deployment across network, cloud, email, and identity with minimal initial configuration overhead.

ExtraHop Reveal(x) — Wire-Data Analytics

ExtraHop focuses on network visibility depth, with particular strength in encrypted traffic analysis environments. Its platform analyzes all network traffic at the packet level, providing agentless visibility into encrypted flows without requiring decryption, while simultaneously delivering network performance monitoring in a single platform (source: GBHackers).

Notable tradeoff: deep packet inspection at scale introduces infrastructure overhead that buyers should model carefully. Additionally, platform effectiveness is dependent on network sensor placement — coverage gaps in distributed or multi-cloud environments should be assessed during proof-of-concept.

Typically suited for financial services and healthcare environments with complex east-west traffic and regulatory constraints around decryption.

Cybereason — Hybrid XDR Correlation

Cybereason maps behavioral sequences to the MITRE ATT&CK framework, surfacing complete attack narratives rather than isolated events. The approach prioritizes investigation context — connecting initial access through lateral movement to data staging in a single incident view.

Notable tradeoff: attack-story correlation requires reliable telemetry across endpoint, network, and identity layers to function effectively. Partial telemetry coverage produces incomplete narratives and can create gaps in attack visibility. Performance in heavily siloed environments should be validated before full deployment commitment.

Typically suited for complex hybrid enterprises where reducing mean-time-to-investigate is a primary operational objective.

Corelight — Open Network Intelligence

Corelight produces structured, ML-enriched network telemetry that feeds existing SIEM and XDR stacks rather than replacing them. No proprietary detection model imposed. No vendor lock-in on how the data gets operationalized downstream.

Notable tradeoff: the open architecture that gives mature teams full detection ownership also means detection effectiveness is heavily dependent on the engineering capacity and threat-hunting maturity of the team using it. Organizations without dedicated detection engineering resources may find the platform underutilized.

Typically suited for mature security engineering organizations that want analyst-grade network data as the foundation for custom detection development.

Quick Reference Matrix

| Platform | Detection Approach | Primary Coverage | Typically Suited For |
|---|---|---|---|
| Vectra AI | Behavioral AI + attacker mapping | Network, identity, cloud | High-volume SOC environments |
| Darktrace | Unsupervised self-learning AI | Network, cloud, email, OT | Rapid deployment, broad coverage |
| ExtraHop Reveal(x) | Wire-data analytics + ML | Network, encrypted traffic | Compliance-heavy verticals |
| Cybereason | Attack story correlation | Endpoint + network XDR | Hybrid enterprise environments |
| Corelight | Open network evidence + ML | Network telemetry | Mature security engineering teams |

For a structured side-by-side evaluation across pricing, deployment complexity, and integration depth, the startup cybersecurity software comparison guide provides a practical decision framework. A broader market overview is available in the best AI security tools for startups guide.

Operational Advantages — With Grounded Production Caveats

Machine learning intrusion detection startups deliver measurable operational improvements across documented enterprise deployments. They also carry limitations that honest buyers need to weigh before committing.

Detection of novel threats is the most significant strategic advantage. ML systems detect behavioral deviation rather than known signatures, so they can often identify exploitation activity from behavioral signals alone. That applies both to undocumented attack techniques and to vulnerabilities listed in CISA’s Known Exploited Vulnerabilities catalog during the window before patches are widely deployed or signatures are written.

Reduced false positives directly expand analyst operational capacity. According to available industry data, AI security systems can reduce false positives by 60–75% in documented deployments, with organizations reporting SOC analysts handling significantly more legitimate alerts with equivalent headcount (source: All About AI). Results vary based on environment complexity, telemetry completeness, and ongoing model tuning.

Faster detection cycles change breach outcomes in measurable ways. AI-driven security has been associated with substantially shorter average breach detection times, which limits the damage attackers can do during extended dwell periods (source: All About AI). These figures represent averages across organizations with varying baseline maturity and should not be treated as guaranteed outcomes.

Where These Platforms Face Documented Limitations

Adversarial evasion is a genuine and growing production concern. Sophisticated attackers deliberately drift behavior incrementally to remain within evolving baselines — exploiting the adaptive quality that gives ML detection its strength. Slow-walk behavioral drift and training data poisoning are active real-world techniques, not theoretical edge cases. Independent red-team validation against evasion scenarios should be a non-negotiable evaluation requirement.
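The slow-walk problem is easy to reproduce in miniature. The sketch below assumes a deliberately simple detector: an exponentially weighted moving average baseline that flags any value more than 50% above it. The parameters and traffic figures are hypothetical, but the failure mode carries over to more sophisticated adaptive models.

```python
def ewma_detector(values, alpha=0.2, tolerance=0.5):
    """Flag each value that exceeds the adaptive baseline by more than `tolerance`."""
    baseline = values[0]
    flags = []
    for v in values[1:]:
        flags.append(v > baseline * (1 + tolerance))
        # The baseline adapts to every observation, including malicious ones
        baseline = alpha * v + (1 - alpha) * baseline
    return flags

normal = [100.0] * 5  # hypothetical MB transferred per day

# Attacker A jumps straight to 400 MB per day
sudden = normal + [400.0]

# Attacker B raises volume by 5% per day for 30 days
slow_walk = normal + [100.0 * 1.05 ** day for day in range(1, 31)]

print("sudden jump flagged:", ewma_detector(sudden)[-1])
print("slow walk ever flagged:", any(ewma_detector(slow_walk)))
print(f"slow walk final volume: {slow_walk[-1]:.0f} MB")
```

Attacker B ends up moving more data than attacker A and never triggers an alert, because the baseline learned each small increase as the new normal. This is the behavior a red-team evasion test should try to reproduce against a candidate platform.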

Model drift in production environments creates detection gaps that are rarely disclosed proactively. As organizational behavior evolves — through mergers, restructuring, new application deployments, or remote work expansion — behavioral baselines require active maintenance. Platforms that do not proactively manage model drift will develop coverage gaps over time that neither the vendor nor the customer easily detects.
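One way a team might watch for this kind of drift is to compare the distribution a model was trained on with what it sees today. The sketch below uses the population stability index (PSI) on a single hypothetical feature, login hour. The bin edges, sample data, and the 0.25 alert level (a common rule of thumb, not a standard) are all assumptions.

```python
import math

def psi(expected, actual, bins):
    """Population stability index between two samples over shared bin edges."""
    def shares(sample):
        counts = [0] * (len(bins) - 1)
        for x in sample:
            for i in range(len(bins) - 1):
                if bins[i] <= x < bins[i + 1]:
                    counts[i] += 1
                    break
        # A small floor avoids log(0) when a bin is empty
        return [max(c / len(sample), 1e-4) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

bins = [0, 6, 12, 18, 24]  # hour-of-day buckets

# Hypothetical login hours when the baseline was learned (office hours)
training = [9, 10, 11, 13, 14, 15, 16, 10, 11, 14]

# Hypothetical login hours after a shift to distributed, remote work
current = [7, 8, 20, 21, 22, 23, 19, 10, 14, 22]

DRIFT_ALERT = 0.25  # assumed rule-of-thumb level for a significant shift

drift = psi(training, current, bins)
print(f"PSI = {drift:.2f}, retraining suggested: {drift > DRIFT_ALERT}")
```

A check like this does not say whether the change is benign or malicious. It only says the baseline no longer describes the environment, which is the cue to review and retrain.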

False negatives in regulated and specialized environments remain a documented production risk. OT networks, algorithmic trading infrastructure, research institutions, and healthcare clinical environments all exhibit behavioral profiles that differ substantially from the enterprise IT environments most models are trained on. General-purpose behavioral models may underperform significantly in these contexts without deep customization.

Telemetry dependency is the most underacknowledged limitation. Every claim about detection accuracy is contingent on the quality, completeness, and consistency of the data ingested. Partial cloud coverage, unmonitored network segments, and incomplete identity logging all degrade detection fidelity in ways that vendor benchmarks rarely reflect.

Challenges Facing the Category

Adversarial machine learning warrants more buyer scrutiny than most vendor materials provide. Because slow-walk behavioral drift and training data poisoning are already in use by sophisticated threat groups, ask vendors for independent red-team validation results rather than internal benchmark performance against pre-labeled attack datasets.

Training data gaps create silent coverage weaknesses. A model performing well on standard benchmarks may underperform in environments whose behavioral profiles differ substantially from its training distribution. Proof-of-concept engagements should include simulated attacks tailored to your specific environment and verified against your actual threat model.

Integration complexity consistently exceeds vendor estimates. Connecting ML detection platforms to legacy SIEMs, on-premise appliances, and multi-cloud environments requires engineering effort that standard onboarding timelines routinely understate. AI cloud security solutions with native cloud provider telemetry integration can meaningfully reduce this deployment burden by removing one of the most consistently problematic integration layers.

Governance requirements in regulated environments add deployment complexity that is rarely surfaced during vendor sales cycles. Autonomous response capabilities, data residency requirements, and model explainability standards required by financial regulators and healthcare compliance frameworks can significantly constrain how these platforms are deployed in practice.

Buyer Evaluation Checklist

Machine learning intrusion detection startups should be evaluated based on performance and integration depth.

Move beyond vendor demonstrations. Assess each platform across these specific dimensions before committing:

Detection Performance

Machine learning intrusion detection startups must demonstrate strong detection accuracy in real environments.

- What is the documented false positive rate in live production environments — not benchmark tests designed by the vendor?

- What is detection coverage for MITRE ATT&CK techniques most relevant to your specific threat model?

- Does performance hold consistently across cloud, on-premise, and hybrid configurations?

- How does the platform handle model drift as organizational behavior evolves over time?
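The ATT&CK coverage question above lends itself to a simple scorecard during a proof-of-concept. In this sketch the technique IDs are real MITRE ATT&CK identifiers, but the priority list and the set of techniques the platform detected are hypothetical placeholders for your own threat model and test results.

```python
def coverage_report(priority_techniques, detected):
    """Return (coverage ratio, sorted list of priority techniques not detected)."""
    wanted = set(priority_techniques)
    missing = sorted(wanted - set(detected))
    ratio = (len(wanted) - len(missing)) / len(wanted) if wanted else 0.0
    return ratio, missing

# Hypothetical priority list drawn from an organization's threat model
priority = {
    "T1078": "Valid Accounts",
    "T1087": "Account Discovery",
    "T1021": "Remote Services",
    "T1074": "Data Staged",
    "T1071": "Application Layer Protocol",
}

# Hypothetical techniques the platform alerted on during simulated attacks
detected_in_poc = {"T1078", "T1021", "T1071", "T1566"}

ratio, missing = coverage_report(priority, detected_in_poc)
print(f"priority coverage: {ratio:.0%}")
for technique_id in missing:
    print(f"  gap: {technique_id} {priority[technique_id]}")
```

Scoring against your own priority list keeps the conversation on the techniques that matter to you rather than on a vendor's total technique count.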

Integration Depth

- Which SIEM, SOAR, and EDR platforms integrate natively versus requiring custom API development?

- What telemetry gaps exist in your specific environment, and how does the platform handle incomplete coverage?

- What is the realistic deployment timeline based on reference customers of comparable infrastructure complexity?

Adversarial Resilience

- Has the detection model been independently red-teamed against slow-walk and behavioral drift evasion techniques?

- How does the platform detect threats in environments where attacker behavior deliberately mimics legitimate user patterns?

Governance and Compliance

- What explainability capabilities exist for model decisions — and do they meet your regulatory requirements?

- Do autonomous response capabilities align with your organization’s incident response governance framework?

Total Cost at Scale

- What does pricing look like at 2x and 3x your current asset or traffic volume?

- Are professional services, integration engineering, and ongoing tuning costs fully included in vendor estimates?
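A small cost model makes the scale questions easier to put to a vendor. Every figure below is invented for illustration: the tier boundaries, per-asset prices, and fixed services fee are hypothetical and should be replaced with numbers from an actual quote.

```python
def annual_cost(assets, tiers, fixed_services=0.0):
    """Tiered per-asset pricing. Each tier is (upper asset bound, price per asset)."""
    cost, lower = fixed_services, 0
    for upper, price in tiers:
        if assets > lower:
            cost += (min(assets, upper) - lower) * price
        lower = upper
    return cost

# Hypothetical price list: volume discounts above 1,000 and 5,000 assets
tiers = [(1000, 50.0), (5000, 40.0), (float("inf"), 30.0)]
services = 20_000.0  # hypothetical yearly tuning and integration fee

current_assets = 2000
costs = {m: annual_cost(current_assets * m, tiers, services) for m in (1, 2, 3)}

for multiple, cost in costs.items():
    print(f"{multiple}x volume ({current_assets * multiple} assets): ${cost:,.0f}")
```

Running the same model with and without the services line shows how much of the total sits outside the licence fee.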

The Investment Case

The structural investment thesis behind machine learning intrusion detection startups holds across market cycles for reasons that go beyond headline growth projections.

Cybersecurity spend is operationally non-discretionary in a way that most enterprise software categories are not. Organizations typically renew security software during economic downturns because breach costs — regulatory penalties, operational disruption, reputational damage, recovery expenses — have consistently exceeded prevention costs by multiples that are difficult to argue against in budget conversations.

SaaS delivery models create compounding retention dynamics. Net revenue retention at leading AI security platforms consistently exceeds 120%, driven partly by infrastructure growth within accounts and partly by the switching costs of replacing a deployed behavioral platform that has accumulated 18 months of environmental baseline data. That embedded stickiness is structurally valuable and difficult for new entrants to erode quickly.

The technology moat compounds over time. Effective ML intrusion detection requires proprietary threat signal data accumulated across years of customer deployments, specialized talent at the intersection of ML research and security operations, and continuous model refinement from real-world attack telemetry. Platform consolidation, vertical specialization, and ecosystem partnerships have all accelerated as established players move to lock in behavioral data advantages that make their detection models progressively more difficult to replicate (source: Mordor Intelligence).

Early market position in this category is more defensible than in most pure-software markets — a dynamic reflected clearly in where serious capital is currently flowing.

Where the Category Is Heading: The AI SOC Architecture Model

When evaluating machine learning intrusion detection startups, a four-layer operational framework provides more useful guidance than comparing feature lists in isolation.

Layer 1: Data Ingestion. Network telemetry (NDR), endpoint events (EDR), identity and directory logs, cloud activity, and external threat intelligence feeds. The quality and completeness of ingested data sets the ceiling for everything that follows, and telemetry gaps at this layer propagate silently through every layer above it. Platform decisions here carry the most irreversible downstream consequences.

Layer 2: Detection (ML-IDS). Behavioral analytics engines, anomaly detection models, and deep learning components process ingested data to surface deviations from established baselines. This is the primary value creation layer where machine learning intrusion detection startups most meaningfully differentiate. Evaluate on detection accuracy, false positive rate, MITRE ATT&CK technique coverage, model drift management, and performance validated in your specific environment rather than on vendor-controlled benchmarks.

Layer 3: Response (SOAR/XDR). Automated playbooks execute containment and remediation for high-confidence detections. Human analysts handle escalations and situations requiring contextual judgment. The maturity and governance framework of this layer largely determines how quickly detection capability translates into actual operational impact, and how much organizational risk automated response actions introduce.

Layer 4: Intelligence. Incident findings feed back into model training, continuously enriching detection logic. External intelligence from CISA’s Known Exploited Vulnerabilities catalog and the MITRE ATT&CK knowledge base supplements internal behavioral data with broader threat context that no single organization can independently observe at scale.

Assess each platform across all four layers — not just its detection capabilities in isolation. Integration quality between layers is often what determines real-world effectiveness more than any individual feature.
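The four layers can be sketched as a toy pipeline to show how they depend on one another. This is an illustrative skeleton only: the event shape, the "three times baseline" detection rule, the containment ratio, and the feedback rate are all assumptions, and no real platform is this simple.

```python
def ingest(sources):
    """Layer 1: merge events from every available telemetry source."""
    return [event for events in sources.values() for event in events]

def detect(events, baseline):
    """Layer 2: flag events more than 3x above the entity's baseline (assumed rule).

    An entity with no baseline can never be flagged, so an ingestion gap
    turns silently into a detection gap.
    """
    return [e for e in events if e["value"] > 3 * baseline.get(e["entity"], float("inf"))]

def respond(detections, baseline, auto_ratio=10):
    """Layer 3: auto-contain extreme deviations, escalate the rest to an analyst."""
    actions = []
    for d in detections:
        ratio = d["value"] / baseline[d["entity"]]
        actions.append((d["entity"], "contain" if ratio >= auto_ratio else "escalate"))
    return actions

def learn(baseline, benign_events, rate=0.5):
    """Layer 4: fold analyst-confirmed benign activity back into the baseline."""
    updated = dict(baseline)
    for e in benign_events:
        old = updated[e["entity"]]
        updated[e["entity"]] = old + rate * (e["value"] - old)
    return updated

# Hypothetical telemetry; "host-b" has never been baselined
sources = {
    "ndr": [{"entity": "host-a", "value": 500}],
    "identity": [{"entity": "svc-backup", "value": 40}],
    "edr": [{"entity": "host-b", "value": 900}],
}
baseline = {"host-a": 20, "svc-backup": 10}

events = ingest(sources)
detections = detect(events, baseline)
actions = respond(detections, baseline)

# The analyst confirms the svc-backup activity was a legitimate new job
baseline = learn(baseline, [d for d in detections if d["entity"] == "svc-backup"])

print(actions)
print(baseline)
```

The largest value in the sample data belongs to host-b, yet it produces no detection at all because nothing upstream ever established what normal looks like for it. That is the sense in which the ingestion layer sets the ceiling for everything above it.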

Autonomous SOC: The Near-Term Trajectory

Gartner projects that by 2028, multi-agent AI in threat detection and incident response will grow from roughly 5% to 70% of AI security applications (as cited by Lakera), a transition that will materially reshape SOC staffing models and redefine what security operations roles require day-to-day.

The SOC analyst’s role is actively evolving from detection to validation and response orchestration. The old question — “did this traffic match a known rule?” — is being replaced by a more sophisticated one: “has this system’s behavior deviated from its established baseline in ways consistent with active threat activity?” That is a fundamentally different operating model. It requires different tools, different workflows, different analyst skills, and a different organizational approach to security architecture altogether.

Frequently Asked Questions

Machine learning intrusion detection startups raise important questions for enterprise security teams.

Q1: What is the main difference between traditional IDS and machine learning intrusion detection?

The two take fundamentally different approaches. Legacy IDS matches traffic against known attack signatures, while ML-based intrusion detection analyzes user and system behavior over time to detect anomalies, which makes it more effective against previously unseen or evolving threats.

Q2: How long does it typically take to deploy an ML intrusion detection platform?

Cloud-native SaaS platforms can become operationally active within weeks, while on-premise or deeply integrated enterprise deployments typically take several months. Integration with legacy SIEMs, SOAR platforms, and multi-cloud telemetry sources is consistently the primary factor extending timelines beyond initial vendor estimates — often significantly so.

Q3: Can ML intrusion detection systems be evaded by sophisticated attackers?

Yes — adversarial evasion is a documented and growing production concern. Sophisticated actors use behavioral drift techniques to stay within learned baselines over time. Buyers should require independent red-team validation results against evasion scenarios before making any platform commitment — not just vendor-provided benchmark data.

Q4: Which industries benefit most from ML-based intrusion detection?

Financial services, healthcare, and critical infrastructure tend to benefit most given high breach costs, regulatory complexity, and large distributed attack surfaces. That said, any organization managing sensitive data across hybrid cloud environments can gain measurably from behavioral detection — provided telemetry coverage is sufficiently complete to support accurate baseline modeling.

Q5: How do these platforms reduce false positives in practice?

ML-based platforms reduce false positives by combining unsupervised and supervised models that learn the normal behavior of each environment. Over time, analyst feedback refines detection accuracy, allowing the platform to filter out benign activity and prioritize high-confidence threats more effectively.

Q6: What should enterprises prioritize when evaluating ML intrusion detection platforms?

Start with detection accuracy validated in your specific production environment — not vendor-designed benchmarks. Then assess integration depth with your existing stack, telemetry completeness, governance alignment for autonomous response actions, total cost modeling at scale, and realistic deployment timelines verified against comparable reference customer deployments.

Conclusion

Machine learning intrusion detection startups are redefining how organizations approach modern cybersecurity.

The security industry spent two decades building detection tools around a model that has increasingly struggled to keep pace. Signature matching cannot track an attack landscape that evolves faster than any rule library can follow — and that limitation is structural, not something incremental investment in legacy tooling will resolve.

In many modern enterprise environments, ML-based intrusion detection platforms are beginning to displace that model with behavioral systems that model user and system behavior over time, surface threats that have never been previously documented, and automate initial response workflows at a speed that manual processes often cannot match at scale.

These platforms are not uniformly mature, uniformly accurate, or uniformly straightforward to deploy. Telemetry coverage, model drift management, adversarial resilience, and governance alignment all determine whether a deployment delivers on its promise or simply moves the detection gap from one layer to another.

The four-layer architecture model, vendor tradeoff analysis, and evaluation checklist in this guide exist to support exactly that kind of evidence-based, environment-specific selection process.

The structural shift is worth stating clearly: security operations are moving from deterministic rule enforcement to continuous probabilistic inference — from “did this match a known pattern” to “has this behavior deviated in ways consistent with threat activity.” That evolution changes not just the tools enterprises deploy, but the skills their analysts need, the governance frameworks their compliance teams require, and the architectural decisions their security leaders make.

In most organizations today, the decision is no longer whether ML-based intrusion detection belongs in the security stack. The question is which failure mode you are willing to accept — blind spots, or noise — and which platform gives you the operational leverage to manage that tradeoff on your terms.

